Introduction

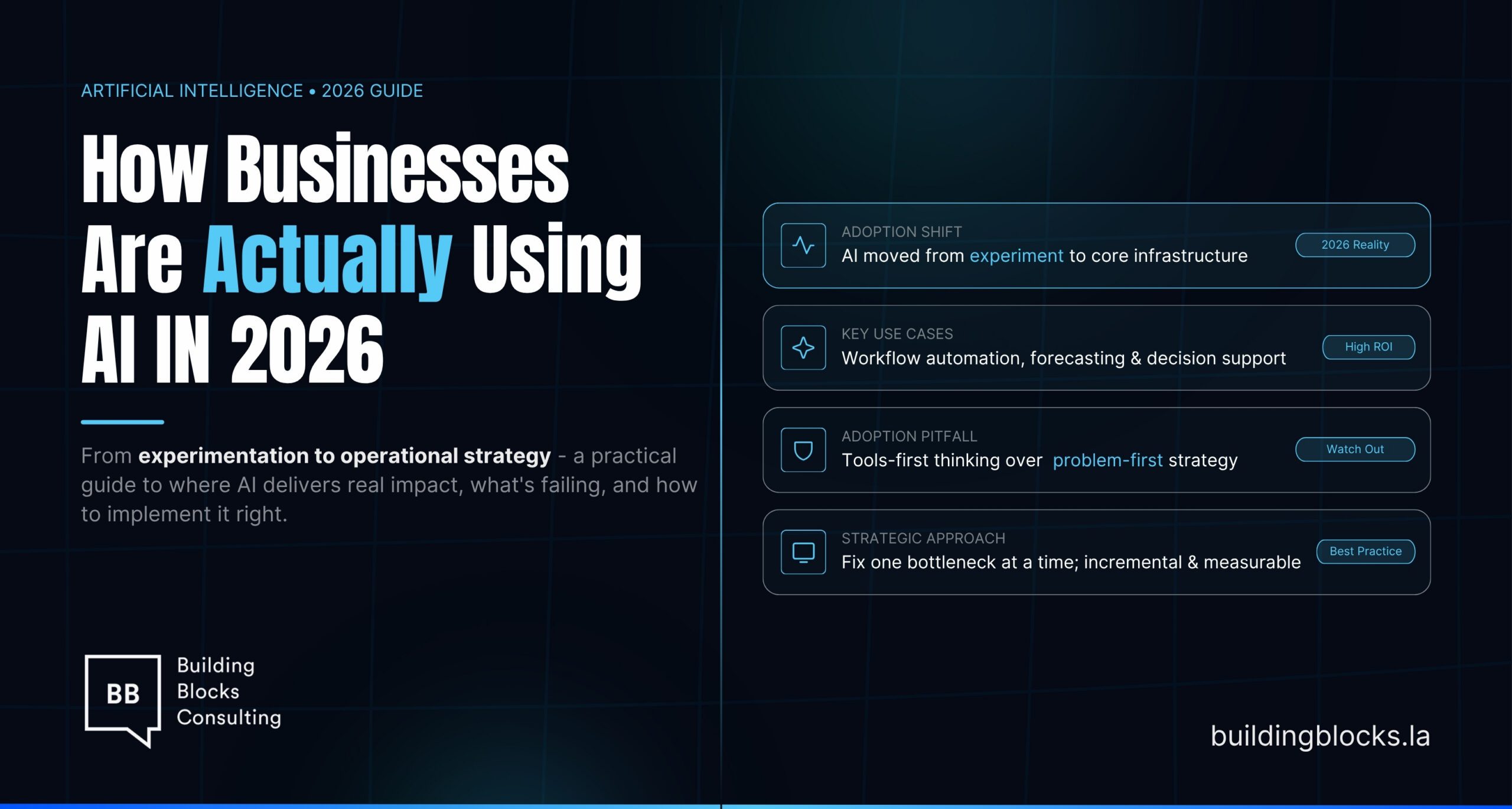

In the fast-evolving world of Artificial Intelligence (AI), building a Minimum Viable Product (MVP) brings both new complexity and new opportunity. Traditional MVP frameworks often fall short when applied to AI-native products because of challenges unique to AI: data dependency, variable model performance, and the need for continuous learning. Enter the AI-PRD Blueprint, a 4D framework (Discover, Design, Develop, Deploy) tailored specifically for AI-first product development.

In this comprehensive guide, we’ll unpack each phase of the AI-PRD Blueprint, explore key AI-specific considerations, and illustrate the journey with real-world examples. Whether you’re an AI startup founder, a product marketer, or a machine learning engineer, this guide will help you navigate the complexities of building AI products that deliver real user value.

The Unique Challenge of AI-First MVPs

Unlike traditional software, AI products are not deterministic: the same input can yield different outputs, and output quality varies from case to case. This variability introduces new hurdles for teams accustomed to clear-cut product roadmaps. AI products can:

- Hallucinate: AI models can generate plausible but incorrect outputs.

- Degrade over time: As user behavior changes, model accuracy can decline.

- Be data-hungry: High-quality, annotated data is critical.

- Need specialized UX: Users interact with AI differently than with traditional apps.

These nuances demand a purpose-built framework for MVPs that can adapt to AI’s iterative and probabilistic nature.

Introducing the AI-PRD Blueprint: Discover, Design, Develop, Deploy

The AI-PRD Blueprint provides a structured yet flexible approach to navigate AI product development.

- Discover: Identify the real problem, data sources, and user pain points.

- Design: Architect the AI solution, from UX to model selection.

- Develop: Build, train, and test the AI model and supporting systems.

- Deploy: Launch the MVP, monitor performance, and iterate.

This 4D framework helps ensure that AI-native products are not only functional but also user-centric, ethical, and scalable.

Phase 1: Discover

Problem Discovery

The first step is deeply understanding the problem you’re solving. AI should not be a solution in search of a problem.

Example:

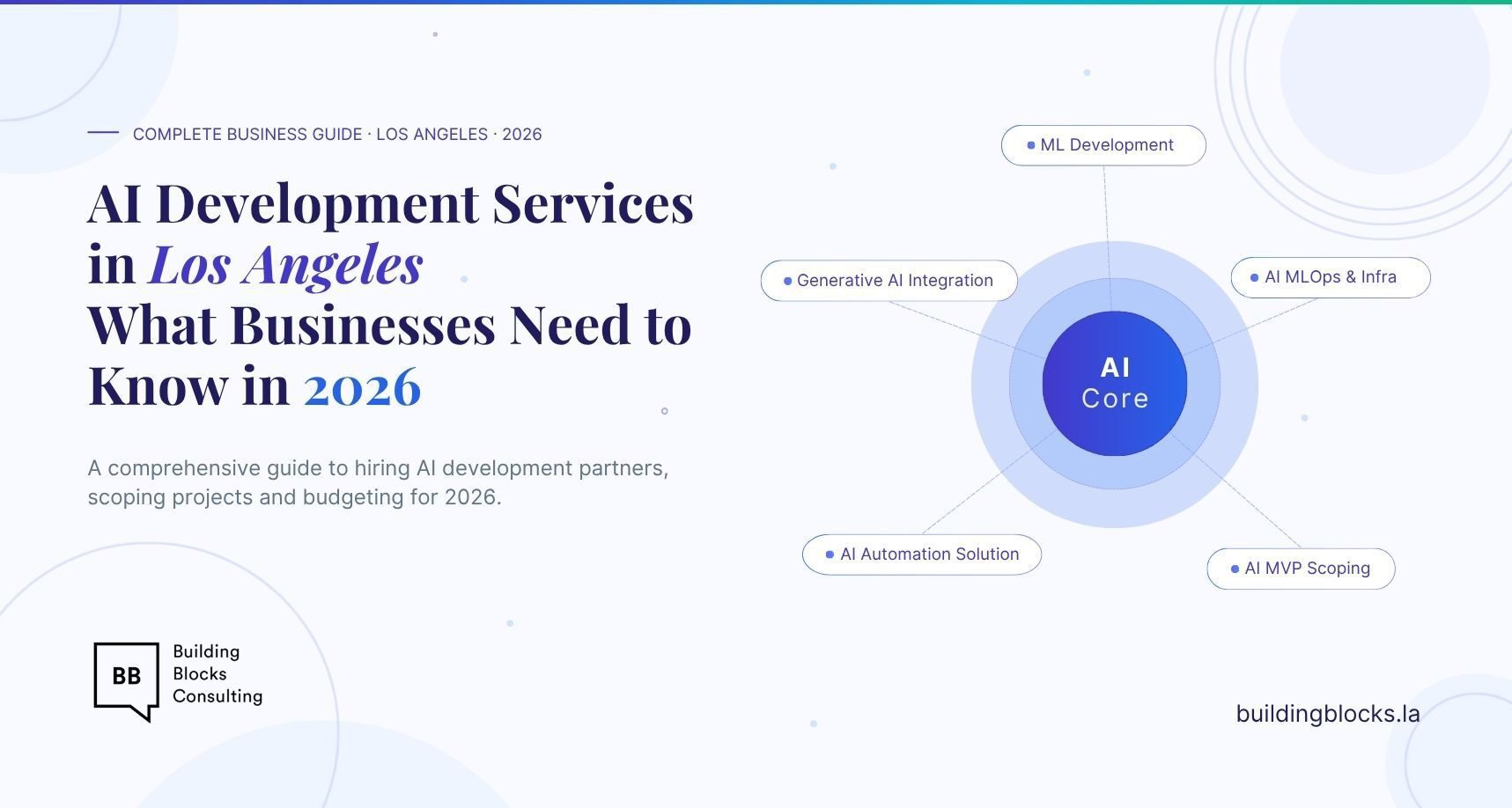

- Gong.io, a revenue intelligence platform, started by identifying the problem of sales teams not having actionable insights from call data.

Data Discovery

AI models are only as good as the data they train on. Identify:

- Data sources: Internal datasets, open data, APIs

- Data quality: Clean, annotated, representative

- Data ownership and privacy implications
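A first-pass audit of a candidate dataset can be scripted before any modeling starts. The sketch below assumes records are dicts with hypothetical `text` and `label` fields; it reports missing labels, exact duplicate texts, and the label distribution as rough proxies for the "clean, annotated, representative" criteria above.

```python
from collections import Counter
from typing import Any


def audit_dataset(records: list[dict[str, Any]]) -> dict[str, Any]:
    """Return simple quality signals for a list of {"text", "label"} records.

    The field names are hypothetical; adapt them to your own schema.
    """
    total = len(records)
    # Records with no label cannot be used for supervised training.
    missing_labels = sum(1 for r in records if not r.get("label"))
    # Exact duplicate texts inflate metrics and can leak across train/test splits.
    text_counts = Counter(r.get("text", "") for r in records)
    duplicates = sum(count - 1 for count in text_counts.values() if count > 1)
    # A heavily skewed label distribution hints that the data is not representative.
    label_counts = Counter(r["label"] for r in records if r.get("label"))
    return {
        "total": total,
        "missing_label_rate": missing_labels / total if total else 0.0,
        "duplicate_texts": duplicates,
        "label_distribution": dict(label_counts),
    }


if __name__ == "__main__":
    sample = [
        {"text": "refund please", "label": "billing"},
        {"text": "refund please", "label": "billing"},
        {"text": "app crashes on login", "label": "bug"},
        {"text": "how do I export?", "label": None},
    ]
    print(audit_dataset(sample))
```

These checks will not catch subtler problems such as mislabeled examples or near-duplicates, but they are cheap enough to run on every new data source.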

Example:

- Tesla leverages data from its fleet to continuously improve its self-driving algorithms.

User Discovery

Conduct user interviews, surveys, and usage pattern analyses to understand:

- How users currently solve the problem

- Their openness to AI-driven solutions

Competitive Landscape

Identify existing solutions, both AI and non-AI, and assess their strengths and gaps.

Phase 2: Design

UX for AI Products

Designing AI UX requires handling uncertainty and setting user expectations.

- Explainability: Show why the AI made a decision.

- Fallback options: Offer manual alternatives when AI fails.
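One common way to implement a fallback is to gate AI output on a confidence score and hand off to a manual path below a threshold. In this sketch, `classify_intent` is a hypothetical stand-in for a real model, the 0.7 threshold is an arbitrary assumption to tune on real data, and the `reason` field is a simple gesture toward explainability.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Suggestion:
    label: Optional[str]  # None means "no AI suggestion; use the manual path"
    confidence: float
    reason: str           # short, user-facing explanation of the outcome


CONFIDENCE_THRESHOLD = 0.7  # assumption: tune on a validation set


def classify_intent(text: str) -> tuple[str, float]:
    """Hypothetical model call; replace with a real classifier."""
    if "refund" in text.lower():
        return "billing", 0.92
    return "general", 0.41


def suggest(text: str) -> Suggestion:
    label, confidence = classify_intent(text)
    if confidence < CONFIDENCE_THRESHOLD:
        # Low confidence: do not guess; route the user to a manual alternative.
        return Suggestion(None, confidence,
                          "Not confident enough to suggest a category; please choose one.")
    return Suggestion(label, confidence,
                      f"Suggested '{label}' with {confidence:.0%} confidence.")


if __name__ == "__main__":
    print(suggest("I want a refund"))
    print(suggest("hello there"))
```

The UI can then render `label` as an accept-or-reject suggestion and fall back to a manual picker whenever it is `None`.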

Example:

- Grammarly allows users to accept or reject AI suggestions, maintaining user control.

Model Selection and Experimentation

Choose between:

- Pre-trained models: e.g., GPT-4, BERT

- Fine-tuning: Customizing models for domain-specific tasks

- RAG Pipelines: Combining LLMs with external knowledge bases

Data Strategy and Annotation

Develop a robust data labeling strategy, possibly using Human-in-the-Loop (HITL) for continuous feedback.
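When the labeling budget is limited, one widely used HITL tactic is uncertainty sampling: send annotators the items the current model is least sure about. This sketch assumes the model exposes per-class probabilities for each unlabeled item (the `predictions` mapping is hypothetical) and ranks items by predictive entropy.

```python
import math


def entropy(probs: list[float]) -> float:
    """Shannon entropy in nats; higher means the model is less certain."""
    return -sum(p * math.log(p) for p in probs if p > 0)


def select_for_labeling(predictions: dict[str, list[float]], budget: int) -> list[str]:
    """Pick the `budget` item IDs whose predicted class distribution is most uncertain."""
    ranked = sorted(predictions, key=lambda item_id: entropy(predictions[item_id]), reverse=True)
    return ranked[:budget]


if __name__ == "__main__":
    preds = {
        "doc-1": [0.98, 0.01, 0.01],  # confident
        "doc-2": [0.34, 0.33, 0.33],  # very uncertain
        "doc-3": [0.60, 0.30, 0.10],  # somewhat uncertain
    }
    print(select_for_labeling(preds, budget=2))  # ['doc-2', 'doc-3']
```

Entropy is only one uncertainty measure; margin between the top two classes is a common alternative, and either works best when mixed with some random samples so the labeled set does not drift toward edge cases only.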

Defining Success Metrics

Define AI-specific metrics early:

- Accuracy, precision, recall

- Hallucination rate

- Response latency

- User satisfaction scores
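The first three metrics above can be computed from a labeled evaluation set. This sketch assumes binary labels, a hypothetical per-response `hallucinated` flag set by human reviewers or an automated fact-check, and latencies in milliseconds; p95 uses the simple nearest-rank percentile.

```python
import math


def precision_recall_accuracy(y_true: list[int], y_pred: list[int]) -> tuple[float, float, float]:
    """Binary-classification metrics, treating 1 as the positive class."""
    if not y_true:
        raise ValueError("empty evaluation set")
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    correct = sum(1 for t, p in zip(y_true, y_pred) if t == p)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    return precision, recall, correct / len(y_true)


def hallucination_rate(hallucinated: list[bool]) -> float:
    """Share of reviewed responses flagged as containing unsupported claims."""
    return sum(hallucinated) / len(hallucinated) if hallucinated else 0.0


def p95_latency(latencies_ms: list[float]) -> float:
    """Nearest-rank 95th percentile of response latency."""
    if not latencies_ms:
        raise ValueError("no latency samples")
    ordered = sorted(latencies_ms)
    rank = math.ceil(0.95 * len(ordered))
    return ordered[rank - 1]


if __name__ == "__main__":
    print(precision_recall_accuracy([1, 0, 1, 1, 0], [1, 0, 0, 1, 1]))
    print(hallucination_rate([True, False, False, False]))
    print(p95_latency([120, 95, 430, 110, 101, 98, 2000, 115, 105, 99]))
```

Tail latency (p95 or p99) is usually more telling than the mean for user experience, because a handful of very slow responses can hide behind a healthy average.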

Phase 3: Develop

Model Training and Fine-Tuning

Use transfer learning and domain adaptation to improve performance with less data.

Example:

- Hugging Face Transformers provide pre-trained models that can be fine-tuned on niche datasets.

Retrieval-Augmented Generation (RAG) Pipelines

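Retrieve relevant documents at query time and pass them to the LLM as context, so that outputs are grounded in current, citable sources rather than only in the model's training data.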

Example:

- Perplexity.ai uses RAG to provide sources alongside AI-generated answers.
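The retrieve-then-generate pattern can be sketched without any external services. To stay self-contained, this example substitutes a toy bag-of-words cosine similarity for a real embedding model and vector store, and it stops at building the grounded prompt; the LLM call itself is left out. Numbering the sources in the prompt is one way to let the model cite them.

```python
import math
import re
from collections import Counter


def tokenize(text: str) -> Counter:
    """Lowercased word counts; a stand-in for a real embedding."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(query: str, docs: dict[str, str], k: int = 2) -> list[str]:
    """Return the IDs of the k documents most similar to the query."""
    q = tokenize(query)
    scored = sorted(docs, key=lambda doc_id: cosine(q, tokenize(docs[doc_id])), reverse=True)
    return scored[:k]


def build_prompt(query: str, docs: dict[str, str], k: int = 2) -> str:
    """Assemble a grounded prompt with numbered sources the model is asked to cite."""
    ids = retrieve(query, docs, k)
    context = "\n".join(f"[{i}] ({doc_id}) {docs[doc_id]}" for i, doc_id in enumerate(ids, start=1))
    return (
        "Answer using only the sources below and cite them as [n]. "
        "If the sources do not contain the answer, say so.\n\n"
        f"Sources:\n{context}\n\nQuestion: {query}"
    )


if __name__ == "__main__":
    kb = {
        "refunds": "Refunds are issued within 14 days of a return.",
        "shipping": "Standard shipping takes 3 to 5 business days.",
    }
    print(build_prompt("How long does shipping take?", kb, k=1))
```

In production the retriever would typically be an embedding model plus a vector index, but the contract stays the same: query in, top-k passages out, passages placed into the prompt with identifiers the answer can cite.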

Human-in-the-Loop (HITL)

Integrate human feedback loops for:

- Quality assurance

- Continuous model improvement
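A common HITL pattern for quality assurance is to send every low-confidence output to a reviewer and randomly audit a small share of the rest, so that errors the model is confidently wrong about still surface. The 0.6 threshold, the 5% audit rate, and the record fields below are assumptions; reviewer corrections collected this way can later serve as training data.

```python
import random
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class ModelOutput:
    output_id: str
    text: str
    confidence: float


@dataclass
class ReviewQueue:
    confidence_threshold: float = 0.6  # assumption: below this, always review
    audit_rate: float = 0.05           # assumption: share of confident outputs spot-checked
    rng: random.Random = field(default_factory=lambda: random.Random(0))
    pending: list = field(default_factory=list)
    corrections: dict = field(default_factory=dict)

    def route(self, out: ModelOutput) -> bool:
        """Queue the output for human review if it is low-confidence or randomly audited."""
        if out.confidence < self.confidence_threshold or self.rng.random() < self.audit_rate:
            self.pending.append(out)
            return True
        return False

    def record_review(self, output_id: str, corrected_text: Optional[str]) -> None:
        """Store the reviewer's verdict; None means the output was accepted as-is."""
        self.pending = [o for o in self.pending if o.output_id != output_id]
        if corrected_text is not None:
            self.corrections[output_id] = corrected_text


if __name__ == "__main__":
    queue = ReviewQueue()
    print(queue.route(ModelOutput("r1", "Your order ships Monday.", 0.35)))  # True: low confidence
    queue.record_review("r1", "Your order ships Tuesday.")
    print(queue.corrections)
```

The random audit is what keeps the measured error rate honest: reviewing only low-confidence outputs would never reveal how often the model is wrong while sounding sure.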

System Integration and Testing

Test across multiple scenarios to ensure:

- Model robustness

- Bias mitigation

- Security and privacy compliance
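One simple bias check during testing is slice-based evaluation: compute the same metric for each user group and flag large gaps. The group attribute, the sample records, and the 0.05 tolerance are illustrative assumptions; which groups and metrics matter depends on the product and on applicable regulations.

```python
from collections import defaultdict


def accuracy_by_group(records: list[tuple[str, int, int]]) -> dict[str, float]:
    """records: (group, y_true, y_pred) triples. Returns accuracy per group."""
    correct: dict[str, int] = defaultdict(int)
    total: dict[str, int] = defaultdict(int)
    for group, y_true, y_pred in records:
        total[group] += 1
        correct[group] += int(y_true == y_pred)
    return {g: correct[g] / total[g] for g in total}


def max_gap(per_group: dict[str, float]) -> float:
    """Largest difference between the best- and worst-served group."""
    return max(per_group.values()) - min(per_group.values())


if __name__ == "__main__":
    results = [("en", 1, 1), ("en", 0, 0), ("en", 1, 1), ("es", 1, 0), ("es", 0, 0)]
    scores = accuracy_by_group(results)
    print(scores, "gap exceeds tolerance:", max_gap(scores) > 0.05)
```

Small slices produce noisy estimates, so a flagged gap is a prompt to collect more data for that group, not proof of bias on its own.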

Phase 4: Deploy

Model Deployment and Serving

Deploy models via:

- API endpoints

- Managed cloud platforms such as AWS SageMaker or Google Vertex AI
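For the API-endpoint route, a minimal server can be sketched with Python's standard library alone. The `predict` function is a hypothetical placeholder for real inference, and the `{"text": ...}` request shape and `/predict` path are assumptions. Managed platforms handle concerns this sketch ignores, such as autoscaling, authentication, and model versioning.

```python
import json
from http.server import BaseHTTPRequestHandler, HTTPServer


def predict(text: str) -> dict:
    """Hypothetical model; replace with real inference."""
    return {"label": "positive" if "great" in text.lower() else "neutral"}


def handle_payload(body: bytes) -> tuple[int, bytes]:
    """Validate a JSON request like {"text": "..."} and return (status, JSON body)."""
    try:
        text = json.loads(body)["text"]
    except (ValueError, KeyError, TypeError):
        text = None
    if not isinstance(text, str):
        return 400, json.dumps({"error": "expected JSON body with a string 'text' field"}).encode()
    return 200, json.dumps(predict(text)).encode()


class PredictHandler(BaseHTTPRequestHandler):
    def do_POST(self) -> None:
        if self.path != "/predict":
            self.send_error(404)
            return
        length = int(self.headers.get("Content-Length", 0))
        status, payload = handle_payload(self.rfile.read(length))
        self.send_response(status)
        self.send_header("Content-Type", "application/json")
        self.send_header("Content-Length", str(len(payload)))
        self.end_headers()
        self.wfile.write(payload)


if __name__ == "__main__":
    # POST {"text": "..."} to http://localhost:8000/predict
    HTTPServer(("localhost", 8000), PredictHandler).serve_forever()
```

Keeping validation and inference in `handle_payload`, separate from the HTTP handler, makes the logic testable without starting a server and easy to move behind a different serving framework later.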

Continuous Monitoring and Evaluation

Monitor for:

- Model drift

- Latency spikes

- Unexpected failure cases
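Model drift is often detected by comparing the distribution of a model input or output score in production against a reference window from training or launch. This sketch computes the Population Stability Index (PSI) over fixed bins; the bin edges and the small `eps` floor are assumptions, and the often-quoted rule of thumb (below 0.1 stable, above 0.25 significant shift) is a heuristic rather than a standard.

```python
import math
from bisect import bisect_right


def bin_proportions(values: list[float], edges: list[float]) -> list[float]:
    """Share of values in each bin defined by ascending interior edges."""
    if not values:
        raise ValueError("no values to bin")
    counts = [0] * (len(edges) + 1)
    for v in values:
        counts[bisect_right(edges, v)] += 1
    return [c / len(values) for c in counts]


def psi(reference: list[float], current: list[float], edges: list[float], eps: float = 1e-4) -> float:
    """Population Stability Index: sum of (cur - ref) * ln(cur / ref) over bins."""
    ref = bin_proportions(reference, edges)
    cur = bin_proportions(current, edges)
    total = 0.0
    for e, a in zip(ref, cur):
        e, a = max(e, eps), max(a, eps)  # avoid log(0) for empty bins
        total += (a - e) * math.log(a / e)
    return total


if __name__ == "__main__":
    edges = [0.25, 0.5, 0.75]  # assumption: bins over a 0-1 confidence score
    launch_scores = [0.1, 0.3, 0.6, 0.9] * 25
    this_week = [0.6, 0.9, 0.9, 0.9] * 25
    print(round(psi(launch_scores, this_week, edges), 3))
```

PSI tells you that a distribution moved, not whether accuracy dropped; pairing it with a trickle of freshly labeled production samples shows whether the shift actually hurts quality.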

Post-Launch Feedback Loops

Gather user feedback to:

- Retrain models

- Improve UX

- Identify new features
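A lightweight way to close the loop is to log explicit user feedback next to each AI response and export it periodically. This sketch assumes hypothetical thumbs-up/down events with an optional user correction and an optional complaint tag. It writes approved responses and corrections as JSONL (one JSON object per line; the `prompt`/`completion` field names are an assumption to match to your training tooling) and counts complaint tags to help prioritize UX and feature work.

```python
import json
from collections import Counter
from dataclasses import dataclass
from typing import Optional


@dataclass
class FeedbackEvent:
    prompt: str
    response: str
    thumbs_up: bool
    correction: Optional[str] = None  # user-supplied better answer, if any
    tag: Optional[str] = None         # hypothetical category, e.g. "too_long"


def to_training_jsonl(events: list[FeedbackEvent]) -> str:
    """Keep approved responses and user corrections as (prompt, completion) pairs."""
    lines = []
    for ev in events:
        if ev.correction:
            target = ev.correction
        elif ev.thumbs_up:
            target = ev.response
        else:
            continue  # a bare thumbs-down gives no target answer to learn from
        lines.append(json.dumps({"prompt": ev.prompt, "completion": target}))
    return "\n".join(lines)


def complaint_tags(events: list[FeedbackEvent]) -> Counter:
    """Count tags on negative feedback to prioritize UX fixes and new features."""
    return Counter(ev.tag for ev in events if not ev.thumbs_up and ev.tag)


if __name__ == "__main__":
    log = [
        FeedbackEvent("Summarize my week", "You had 3 meetings.", True),
        FeedbackEvent("Draft a reply", "Dear Sir...", False, tag="wrong_tone"),
    ]
    print(to_training_jsonl(log))
    print(complaint_tags(log))
```

Feedback exported this way still deserves a review pass before retraining, since raw user corrections can themselves be wrong or adversarial.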

Scaling the AI Infrastructure

Ensure the backend can scale with user growth, leveraging:

- Kubernetes for orchestration

- Feature stores for data management

AI-Specific Metrics for MVP Success

Key performance indicators (KPIs) for AI MVPs include:

- Model Accuracy: Is the model predicting correctly?

- Hallucination Rate: Share of responses containing false or unsupported claims

- User Retention: Are users returning?

- Engagement Rates: Interaction frequency

- Latency: Response times under load

- Data Feedback Loops: Volume and quality of feedback data
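User retention is commonly tracked as day-N retention: of the users who first appeared on a given day, the share who are active again exactly N days later. The sketch assumes an event log of hypothetical `(user_id, date)` pairs. Definitions vary (some teams count activity on or after day N), so pick one and apply it consistently across releases.

```python
from collections import defaultdict
from datetime import date, timedelta


def day_n_retention(events: list[tuple[str, date]], n: int) -> dict[date, float]:
    """Map each cohort (a user's first active date) to its day-n retention rate."""
    active_days: dict[str, set[date]] = defaultdict(set)
    for user_id, day in events:
        active_days[user_id].add(day)

    # Group users into cohorts by the first day they were seen.
    cohorts: dict[date, list[str]] = defaultdict(list)
    for user_id, days in active_days.items():
        cohorts[min(days)].append(user_id)

    return {
        start: sum(1 for u in users if start + timedelta(days=n) in active_days[u]) / len(users)
        for start, users in cohorts.items()
    }


if __name__ == "__main__":
    d0 = date(2024, 1, 1)
    log = [("a", d0), ("b", d0), ("a", d0 + timedelta(days=7))]
    print(day_n_retention(log, n=7))
```

Cohort-level numbers make it easier to see whether a model update or UX change actually moved retention for users who arrived after it shipped.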

Real-World Case Studies

Case Study 1: Jasper AI

Jasper built a content generation platform on fine-tuned GPT models, focused on marketing copy. It emphasized UX by providing templates and adjustable creativity levels.

Case Study 2: Klarna’s AI Assistant

Klarna's assistant processed millions of queries within weeks of launch. The team used HITL to refine responses and scaled rapidly thanks to robust post-launch feedback loops.

Case Study 3: Gong.io

Gong combines NLP with domain-specific data to analyze sales calls, delivering actionable insights that improve sales performance.

Final Thoughts: Evolving Beyond the MVP

An AI MVP is not a one-and-done project. Continuous learning, data feedback, and model retraining are essential for product-market fit and scalability.

Future-Proofing AI Products:

- Regularly update models with new data

- Stay compliant with evolving AI regulations

- Invest in explainability and ethical AI

By following the AI-PRD Blueprint, product teams can systematically build, launch, and scale AI-first MVPs that not only work technically but delight users, secure trust, and deliver business value.
