Most AI projects don’t fail because the model is weak. They fail because the system around the model cannot survive real-world use.

At the leadership level, this distinction is critical. A model that works in a demo or pilot environment is not the same as a system that runs daily, serves customers, handles bad data, respects compliance rules, and stays within budget. Yet many organizations still hire AI developers as if they were hiring researchers, not system builders.

When companies decide to hire AI developers, they are often influenced by academic credentials, buzzwords, or benchmark scores. Those signals feel reassuring, but they rarely predict production success. Production AI is not about intelligence alone. It is about reliability, ownership, and accountability.

This is where hiring needs to change.

Why production AI forces a different hiring mindset

In experimentation, failure is expected and cheap. In production, failure is operational and visible.

Once an AI system goes live, it becomes part of your core infrastructure. It affects customers, internal teams, and financial outcomes. When it fails, the business pays through downtime, incorrect decisions, lost trust, or regulatory exposure.

Many AI initiatives stall because the people building them have never owned systems beyond the training phase. They know how to build a model, but not how to keep it alive.

When you hire AI developers for production systems, you are not hiring for intelligence alone. You are hiring for judgment.

Software engineering is the silent backbone of production AI

One uncomfortable truth for many leaders is that advanced AI knowledge matters less than strong software engineering once systems reach production.

Production AI lives inside applications, services, and workflows. It must integrate cleanly, fail safely, and evolve without breaking everything around it. That requires developers who think in terms of systems, not scripts.

Many candidates can build impressive models but struggle to explain how those models run inside a real application. They cannot articulate how updates happen, how failures are handled, or how their code is maintained by others.

That gap is where production AI breaks.

Strong AI developers write code that survives handoffs, audits, and long-term ownership. Weak ones write code that works only while they are present.

Data reality is harsher than most AI demos suggest

In production, data is never clean, stable, or predictable.

Customer behavior changes. Inputs arrive late. Fields go missing. Bias creeps in quietly. The model rarely fails loudly; it degrades slowly, and by the time the business notices, trust is already damaged.

When you hire AI developers, you must look for people who understand this reality. They should expect data issues, design for them, and monitor them continuously. They should know that data pipelines are not plumbing; they are the foundation.
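As a rough illustration of what "designing for data issues" can look like, here is a minimal Python sketch that screens a batch of records for missing fields and late arrivals before they reach a model. The field names, the one-hour freshness budget, and the `check_batch` helper are all assumptions made for this example, not a prescribed standard.

```python
from dataclasses import dataclass, field
from datetime import datetime, timedelta, timezone

REQUIRED_FIELDS = ("customer_id", "amount", "event_time")  # hypothetical schema
MAX_DELAY = timedelta(hours=1)  # hypothetical freshness budget


@dataclass
class BatchReport:
    total: int = 0
    missing_fields: int = 0
    late: int = 0
    issues: list = field(default_factory=list)


def check_batch(records, now):
    """Count records with missing fields or stale timestamps before scoring."""
    report = BatchReport()
    for index, record in enumerate(records):
        report.total += 1
        missing = [name for name in REQUIRED_FIELDS if record.get(name) is None]
        if missing:
            report.missing_fields += 1
            report.issues.append((index, "missing: " + ", ".join(missing)))
            continue
        # Only records with a complete schema are checked for lateness.
        if now - record["event_time"] > MAX_DELAY:
            report.late += 1
            report.issues.append((index, "late"))
    return report


if __name__ == "__main__":
    now = datetime.now(timezone.utc)
    batch = [
        {"customer_id": 1, "amount": 10.0, "event_time": now},
        {"customer_id": 2, "amount": None, "event_time": now},
    ]
    print(check_batch(batch, now))
```

A developer with production experience would typically track these counts over time and alert on them, rather than inspecting a single batch by hand.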

Leaders often underestimate this skill because it is invisible when done well. But when done poorly, it becomes the most expensive problem in the system.

A practical way to distinguish production-ready AI developers

At this point, most executives ask the same question: How do we tell who is truly production-ready? The difference usually shows up in how candidates talk about their work. The table below captures a pattern seen repeatedly in real production environments.

This contrast matters because production systems reward discipline, not brilliance alone.

Deployment and MLOps are where most AI projects stall

Training a model is rarely the hardest part. Keeping it useful over time is.

Once deployed, models age. Data drifts. Assumptions break. Without proper monitoring and retraining, performance slowly degrades. This decay is subtle and dangerous because it often goes unnoticed until outcomes suffer.
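One common way to make this kind of decay visible is to compare the distribution of a feature in live traffic against the distribution seen at training time. The sketch below uses the Population Stability Index for that comparison. It is one option among several, and the function name, bin count, and smoothing constant are assumptions chosen for illustration.

```python
import math


def psi(baseline, live, bins=10, eps=1e-6):
    """Population Stability Index between two samples of one numeric feature."""
    lo, hi = min(baseline), max(baseline)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 if the baseline is constant

    def shares(values):
        counts = [0] * bins
        for v in values:
            # Values outside the baseline range are clamped into the edge bins.
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1
        # eps keeps empty bins from producing log(0) or division by zero.
        return [max(c / len(values), eps) for c in counts]

    expected, actual = shares(baseline), shares(live)
    return sum((a - e) * math.log(a / e) for a, e in zip(actual, expected))


if __name__ == "__main__":
    training = list(range(100))
    production = [v + 50 for v in training]  # simulated shift in the inputs
    print("no shift:", psi(training, training))
    print("shifted: ", psi(training, production))
```

A frequently quoted rule of thumb treats a PSI above roughly 0.25 as a significant shift, but that threshold is a starting point to tune per feature, not a guarantee.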

Production-ready AI developers understand that deployment is not the end of the project. It is the beginning of responsibility.

They design systems that can be observed, updated, rolled back, and improved without disruption. They treat models as living components, not finished artifacts.
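To make "rolled back" concrete, here is a deliberately small Python sketch of a registry that remembers which model version was promoted and can return to the previous one. The `ModelRegistry` class is hypothetical and kept in memory for illustration. A real deployment would rely on a dedicated registry or deployment tool.

```python
class ModelRegistry:
    """In-memory stand-in for a model registry (hypothetical, for illustration)."""

    def __init__(self):
        self._versions = {}  # version label -> callable model
        self._history = []   # promotion order, newest last

    def register(self, version, model):
        self._versions[version] = model

    def promote(self, version):
        if version not in self._versions:
            raise KeyError(f"unknown version: {version}")
        self._history.append(version)

    def rollback(self):
        """Return to the previously promoted version and report its label."""
        if len(self._history) < 2:
            raise RuntimeError("no earlier version to roll back to")
        self._history.pop()
        return self._history[-1]

    @property
    def active(self):
        return self._history[-1] if self._history else None

    def predict(self, features):
        if self.active is None:
            raise RuntimeError("no model has been promoted")
        return self._versions[self.active](features)


if __name__ == "__main__":
    registry = ModelRegistry()
    registry.register("v1", lambda features: 0)
    registry.register("v2", lambda features: 1)
    registry.promote("v1")
    registry.promote("v2")
    print(registry.active, registry.predict({}))
    registry.rollback()
    print(registry.active, registry.predict({}))
```

The point of the design is that the previous version stays registered and addressable, so recovering from a bad release is a single operation rather than an emergency rebuild.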

For leadership, this capability directly affects continuity. AI systems without lifecycle management eventually become frozen and unusable.

Cost is not a finance problem; it is a design problem

AI infrastructure can be expensive, and poor technical decisions surface quickly in cloud bills.

The difference between a sustainable AI system and an unsustainable one often comes down to early design choices: model complexity, inference frequency, infrastructure selection, and scaling strategy.

Strong AI developers think about these constraints early. They understand that accuracy gains must justify their cost. They design systems that scale with demand, not ahead of it.
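A back-of-the-envelope calculation is often enough to expose these trade-offs early. The Python sketch below estimates monthly serving cost from request volume, compute time per request, and an hourly machine price. Every figure in it is an invented assumption for illustration, not a benchmark or a vendor price.

```python
def monthly_inference_cost(requests_per_day, seconds_per_request,
                           hourly_rate, utilization=0.5, days=30):
    """Rough monthly serving cost; all inputs are assumed figures."""
    busy_hours = requests_per_day * seconds_per_request / 3600 * days
    # Machines are rarely fully busy, so billed hours exceed busy hours.
    billed_hours = busy_hours / utilization
    return billed_hours * hourly_rate


if __name__ == "__main__":
    # Hypothetical comparison: a small model versus a larger, slower one.
    small = monthly_inference_cost(1_000_000, 0.02, hourly_rate=1.0)
    large = monthly_inference_cost(1_000_000, 0.20, hourly_rate=4.0)
    print(f"small model: ${small:,.2f} per month")
    print(f"large model: ${large:,.2f} per month")
    print(f"extra spend the accuracy gain must justify: ${large - small:,.2f}")
```

Under these assumed inputs the larger model costs about forty times more to serve, which is the kind of gap an accuracy improvement would need to justify in business terms.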

When you hire AI developers, listen carefully to how they talk about trade-offs. If cost awareness feels like an afterthought, it will become your problem later.

Security and compliance cannot be added later

AI systems increasingly touch regulated data and sensitive decisions. In production, security and compliance are not optional layers; they are design constraints.

Developers who have only worked in sandbox environments often underestimate this. They focus on functionality first and assume governance can be added later. In reality, retrofitting compliance is expensive and risky.

Production-ready AI developers think about access controls, audit trails, and explainability from the start. They understand that trust is as important as performance.
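As a small illustration of building access controls and an audit trail in from the start, the Python sketch below records every request against a model, allowed or not, and links each entry to the previous one with a hash so that later edits can be detected. The role names and the `AuditLog` class are hypothetical, and a real system would use managed identity and logging services rather than an in-memory list.

```python
import hashlib
import json

ALLOWED_ROLES = {"analyst", "auditor"}  # hypothetical role list


def _digest(body):
    """Stable SHA-256 hash of an entry's contents."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()


class AuditLog:
    """Append-only log where each entry includes the hash of the one before it."""

    def __init__(self):
        self.entries = []

    def record(self, user, role, action):
        """Log the attempt, then report whether the role is permitted."""
        allowed = role in ALLOWED_ROLES
        prev_hash = self.entries[-1]["hash"] if self.entries else ""
        body = {"user": user, "role": role, "action": action,
                "allowed": allowed, "prev": prev_hash}
        self.entries.append({**body, "hash": _digest(body)})
        return allowed

    def verify(self):
        """Return False if any entry was altered or the chain was broken."""
        prev_hash = ""
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if entry["prev"] != prev_hash or entry["hash"] != _digest(body):
                return False
            prev_hash = entry["hash"]
        return True


if __name__ == "__main__":
    log = AuditLog()
    print(log.record("ana", "analyst", "score_application"))
    print(log.record("bob", "intern", "export_predictions"))
    print("log intact:", log.verify())
```

Denied attempts are logged alongside permitted ones, since reviewers and regulators usually want to see both.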

From an executive standpoint, this reduces long-term exposure and prevents unpleasant surprises.

Business understanding separates builders from partners

The most valuable AI developers are not the most technical ones. They are the ones who understand the business problem clearly enough to say no.

Production AI must serve business outcomes: revenue, efficiency, speed, or risk reduction. Developers who lack business context tend to overbuild, overcomplicate, and underdeliver.

When you hire AI developers, evaluate how they frame success. Do they speak in terms of metrics that matter to the business, or only technical benchmarks?

Developers who understand business trade-offs become partners. Those who do not become cost centers.

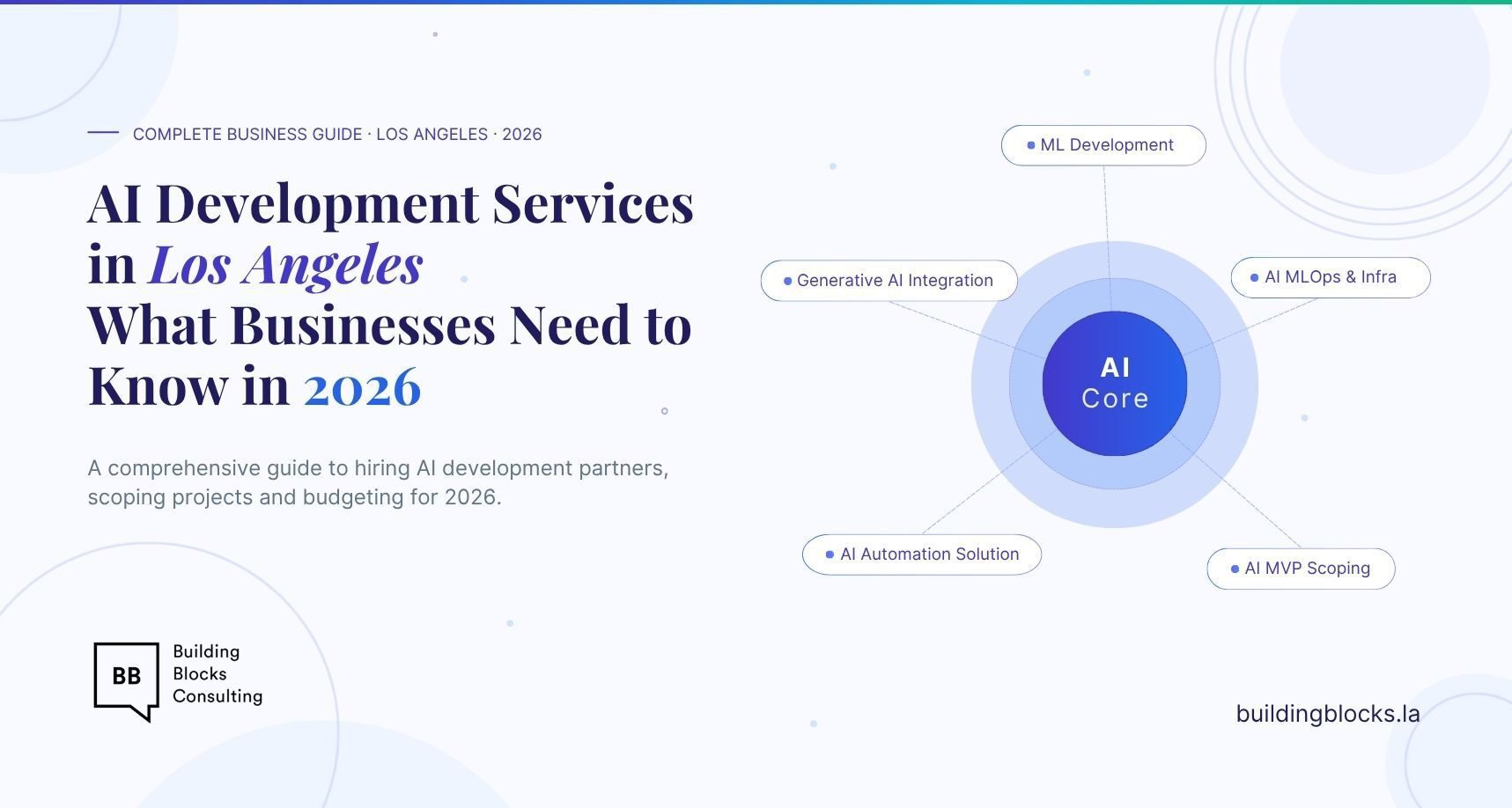

Hiring strategy matters as much as talent

Many organizations assume the only path forward is hiring full-time AI talent internally. In reality, this is often the slowest and riskiest route, especially early on.

Working with teams that have already built production AI systems allows companies to avoid common mistakes and accelerate learning. These teams bring patterns, not just people.

For organizations looking to hire AI developers with strong Python and production system experience, structured delivery teams can provide faster, safer outcomes than trial-and-error hiring.

A practical option to hire AI developers with proven production experience is available here:

This approach allows leadership to focus on business results while technical foundations are handled correctly.

Final perspective for senior leadership

Production AI is not about being impressive. It is about being dependable.

When you hire AI developers, you are deciding whether AI becomes a durable capability or a recurring problem. The skills that matter most are not the loudest ones, but the quiet ones: engineering discipline, data realism, cost awareness, and business judgment.

Companies that succeed with AI hire for stability first and sophistication second.

That decision, more than any algorithm, determines whether AI delivers real value.
